“Who isn’t afraid of Emily Hart?”

The Synthetic Influencer Economy is here: “Vanity, greed, and a desire to be validated…i can has content?”…

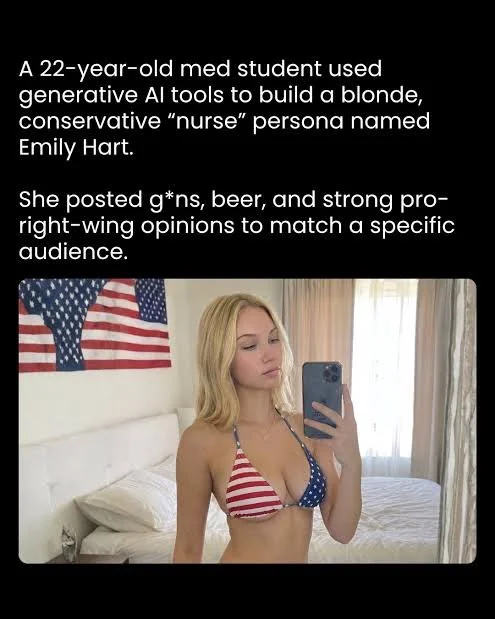

In early 2026, a story reported by outlets citing The Atlantic and WIRED investigations crystallized a phenomenon long in the making: a 22-year-old Indian medical student created a fictional Instagram persona, “Emily Hart,” and turned it into a profitable influencer brand. The account, presented as a politically outspoken American nurse, amassed followers, generated viral engagement, and ultimately produced thousands of dollars per month through merchandise and subscription content. What makes this case notable isn’t simply the deception, but also the efficiency with which events unfolded. In a data point that is fleshlessly capitalistic at best, the creator reportedly spent less than an hour a day managing the account while monetizing a highly targeted audience segment. The supply was purely artificial in that the “influencer” never existed, yet it answered a seemingly bottomless demand and functioned economically as if she were a real force. This is the clearest signal yet that social media influence has been empirically detached from human identity and has evolved, at its core, into an abstract, programmable asset.

Traditionally, influencers monetized real or perceived authenticity: the ‘realness’ fuels very human parasocial relationships, access to the relationship is sold to a brand, and the brand converts that relationship into click-through revenue. As soulless as this model is, there was still a guarantee that companies (*made up of people) were using smaller marketing strategies with (*human) creators to source and sell products more directly to consumers (*who are also people). It’s an evolved capitalism to be clear, but one with human ingenuity at the center of each of its vertical structures.

Fake influencers invert this model. They neither rely on nor begin with authenticity; the only realness that exists in this new model is the strength of vice-signal marketing. I’d argue though, pardon the pun, that we’ve lost touch with ourselves. In real terms, the proliferation of ‘sex-sells’ motives on the internet has served only to further commodify both the creation and consumption of sex (as a unifying animal condition). By removing the human from the center of that relationship, in concept but not visage, we are further separating the meaning from the act from the image. This structural shift points to a starkly different world, one where influencers no longer need to be individuals; instead they are reduced to interfaces between algorithmic optimization and human attention. And sadly, just like any interface, they can be engineered for maximum extraction.

“The Emily Hart case demonstrates how generative AI compresses the cost of identity creation to near zero. The student used AI tools to generate images, craft messaging, and optimize engagement strategies, even selecting a niche audience based on predicted loyalty and spending behavior.” - Hindustan Times

Fake influencers operate within what might be called the financial market of attention, where visibility converts directly into revenue streams. In the Emily Hart case, monetization followed a familiar influencer playbook: bait with subscription-based exclusive content, sell merchandise to market the brand and its identity, and covertly advertise through standardized high-engagement posts to drive algorithmic appeal. The difference here, however, lies in scalability: where a human influencer is constrained by time, reputation, and physical presence, a synthetic influencer can produce effectively unlimited content, pivot identities instantly, and operate continuously. The result is a dramatically improved profit-to-effort ratio. As reported, the account generated “a few thousand dollars a month” with minimal labor. This is not just fraud; it is arbitrage. The creator exploited inefficiencies in platform trust systems and audience perception, converting low-cost AI outputs into high-value economic returns.

Extracting Value from the Trust Market

More insidious than financial extraction is the erosion of what we might call the trust market: the implicit social contract that underpins online interaction. Fake influencers do not merely sell products; they sell belief. The Emily Hart persona succeeded because it embedded itself within an existing ideological and emotional ecosystem. By aligning with a specific political identity, it ‘leveraged trust already present within that community.’ This poses a paradox, however: the persona doesn’t rely on being true to establish truth. It demonstrates that trust can be hijacked for profit more readily than it can be built. As AI improves, the boundary between real and synthetic collapses further. What was once a question of verification becomes a question of epistemology: how do users know anything online is real? Platforms like Instagram are structurally vulnerable to this phenomenon. Their algorithms reward engagement, not authenticity. From the system’s perspective, a fake influencer that generates high interaction is indistinguishable from a real one, at least until external intervention occurs or there is money to be made. The Emily Hart account, for instance, “was only removed after gaining significant traction, highlighting a reactive rather than preventative enforcement model.” (HT) This creates a perverse incentive: the more convincing and engaging the deception, the more profitable it is; and the longer it persists, the more the Overton window shifts into frame.

Academic research on social media impersonation shows that such accounts thrive by mimicking legitimate signals—visual aesthetics, posting patterns, and engagement cues—making it increasingly difficult for users to distinguish genuine from synthetic actors. - arXiv

The rise of fake influencers suggests we are entering a post-authenticity phase of the internet. Identity is no longer a prerequisite for influence; it is a variable to be optimized.

The Emily Hart case is not an anomaly; it is a prototype. As generative AI tools become more accessible, the barrier to entry for synthetic influence will continue to fall. What was once a niche deception has evolved into a widespread strategy, reshaping the economics and the ethical trajectory of digital platforms. In such a bleak ‘post-authenticity’ internet, trust becomes a scarce resource, and one more likely to be continuously exploited…audiences become mere targets and subjects of behavioral prediction models…‘influence’ becomes exponentially more detached from the human experience…and the ouroboros eats us all. As it stands, the question is no longer whether fake influencers exist…they most certainly do. The real question is whether the concept of an ‘influencer’ can survive in a system where authenticity is optional and simulation is often more profitable (environmental toll notwithstanding).

None of this has to be the reality in which we live. We could all decide tomorrow to go back to flip-phones, or go on public-access and speak our minds…(as if those channels would compare) but we won’t, because we’re moving forward faster than we’re looking back. Nevertheless, we would all benefit from a reminder that truth can and must exist within all of us, individually and cumulatively, so we can better navigate the future in all its deceptive glory…or, to parlay the ouroboros metaphor: art imitates life, life imitates art…but humans make life, and humans make art, soooo in turn we get a huge say in how to operate within that cycle. Ultimately, regulating extraction is key here: if profitability were moderated, the consumerism surrounding it would have a chance to self-correct…thus forcing evolution into a more sustainable, augmentative model.